Ei Mix is a voice-driven AI system designed to interpret user intent and dynamically orchestrate personalized audio sessions. The platform integrates natural language processing, content selection logic, and real-time audio processing to deliver structured audio responses based on emotional context.

Ei Mix – Innovation Pitch Deck

Explore the interactive Gamma presentation of my SIP Project:

V. 1.3

V. 1.1

🔬 Core Innovation (Proof Statement)

Primary Innovation Claim

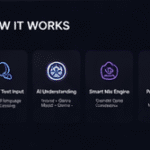

Ei Mix validates a voice-driven interaction pipeline consisting of:

• Natural language user input

• Emotional intent classification via NLP

• AI-driven content orchestration

• Real-time audio processing and delivery

The innovation lies in demonstrating an integrated system where emotional intent directly drives structured audio response generation through modular AI components.

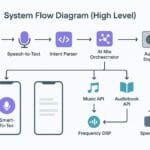

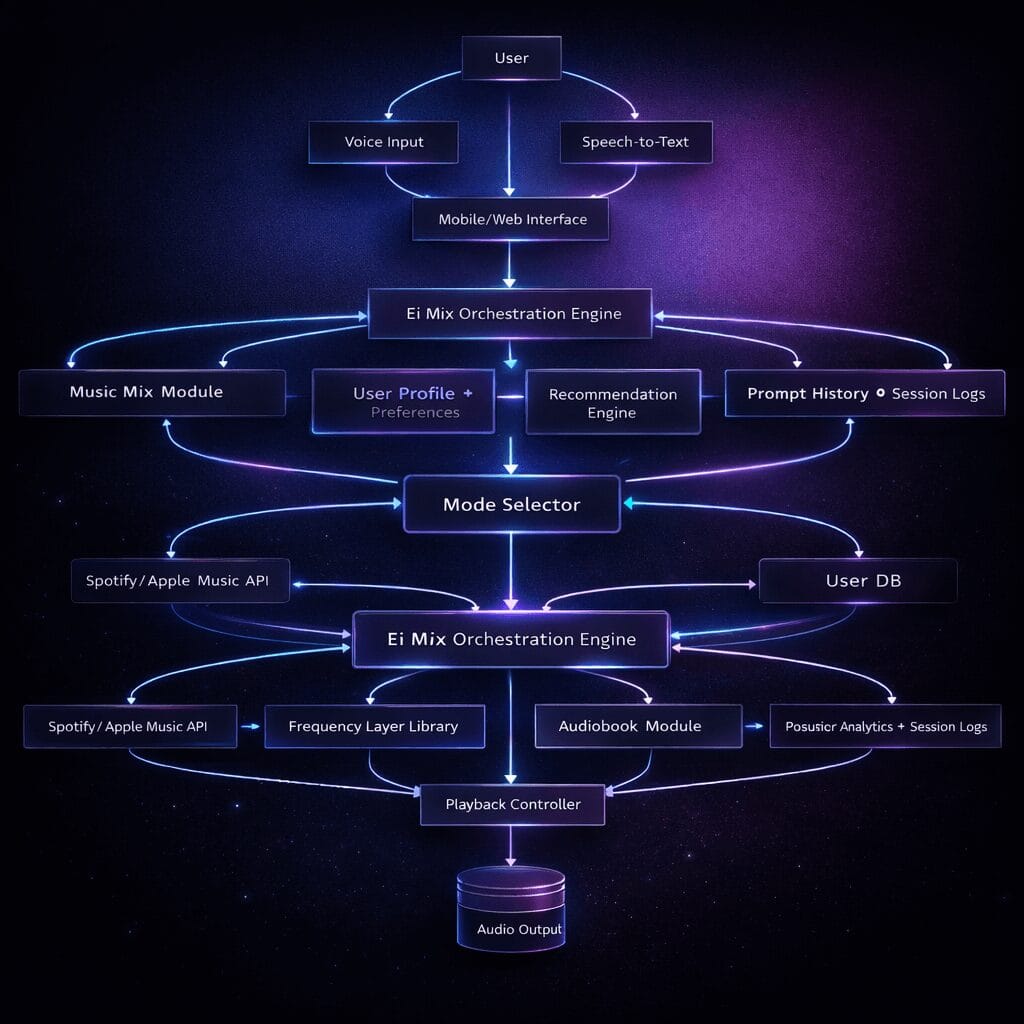

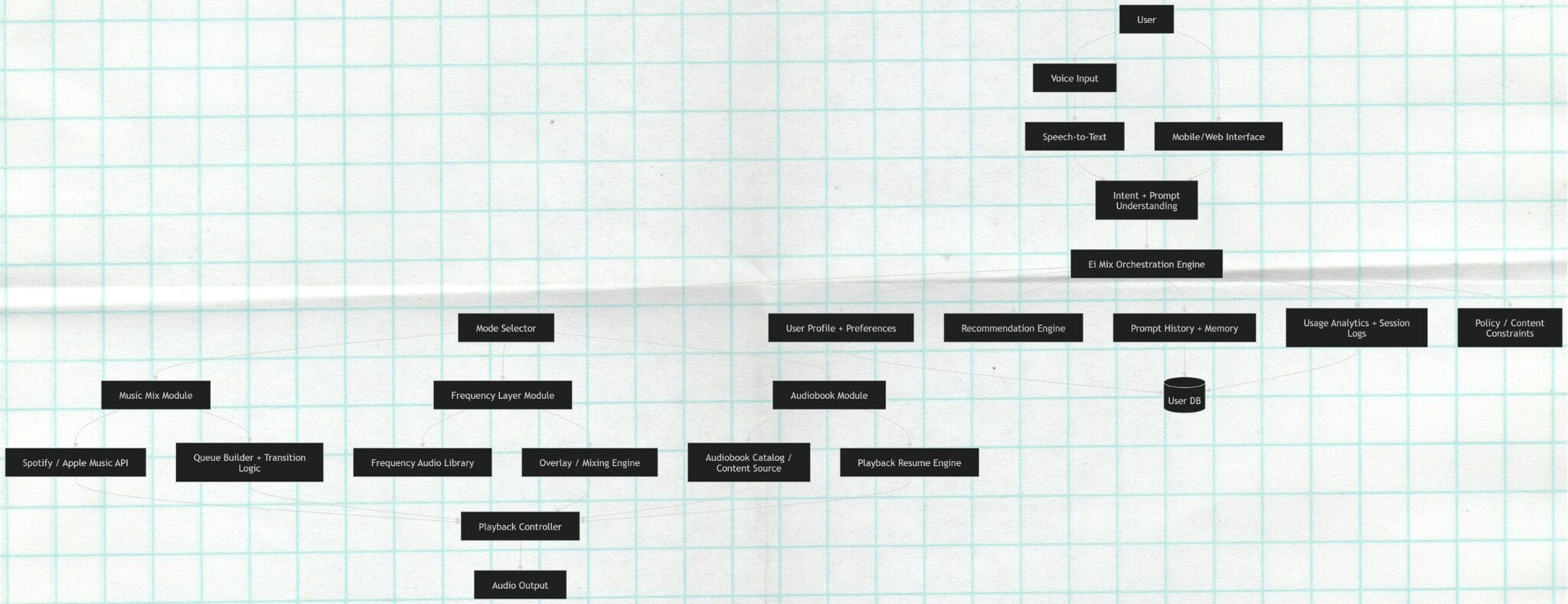

🏗️ Architecture & Workflow

System Architecture Overview

Ei Mix uses a modular architecture, separating:

• UI & Voice Input Layer

• NLP Intent Classification

• AI Mix Orchestrator

• External Media APIs

• Audio Processing Engine

• Output Layer

This modular separation enables independent component testing, scalable deployment, and iterative system refinement.
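
The separation described above can be sketched in a few lines. This is an illustrative outline rather than the project's actual code: the `Pipeline` class, the `Track` type, and the stub functions are hypothetical names chosen for the example. It shows how each stage can be swapped for a stub and tested on its own.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Track:
    title: str
    source: str  # e.g. "music" or "meditation"


# Each stage is a plain callable, so any stage can be replaced by a stub in a test.
Classifier = Callable[[str], str]
Orchestrator = Callable[[str], List[Track]]
AudioEngine = Callable[[List[Track]], List[Track]]


@dataclass
class Pipeline:
    classify: Classifier
    orchestrate: Orchestrator
    process: AudioEngine

    def run(self, utterance: str) -> List[Track]:
        intent = self.classify(utterance)   # NLP intent classification
        tracks = self.orchestrate(intent)   # content selection
        return self.process(tracks)         # audio processing before output


# Hypothetical stubs standing in for the real components.
def stub_classifier(text: str) -> str:
    return "stress_relief" if "stress" in text.lower() else "unknown"


def stub_orchestrator(intent: str) -> List[Track]:
    if intent == "stress_relief":
        return [Track("Calm Breathing", "meditation"), Track("Soft Piano", "music")]
    return []


def passthrough_engine(tracks: List[Track]) -> List[Track]:
    return list(tracks)


pipeline = Pipeline(stub_classifier, stub_orchestrator, passthrough_engine)
session = pipeline.run("I'm feeling stressed")
```

Because the stages only share simple types, a real classifier or audio engine could replace a stub without touching the other stages.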

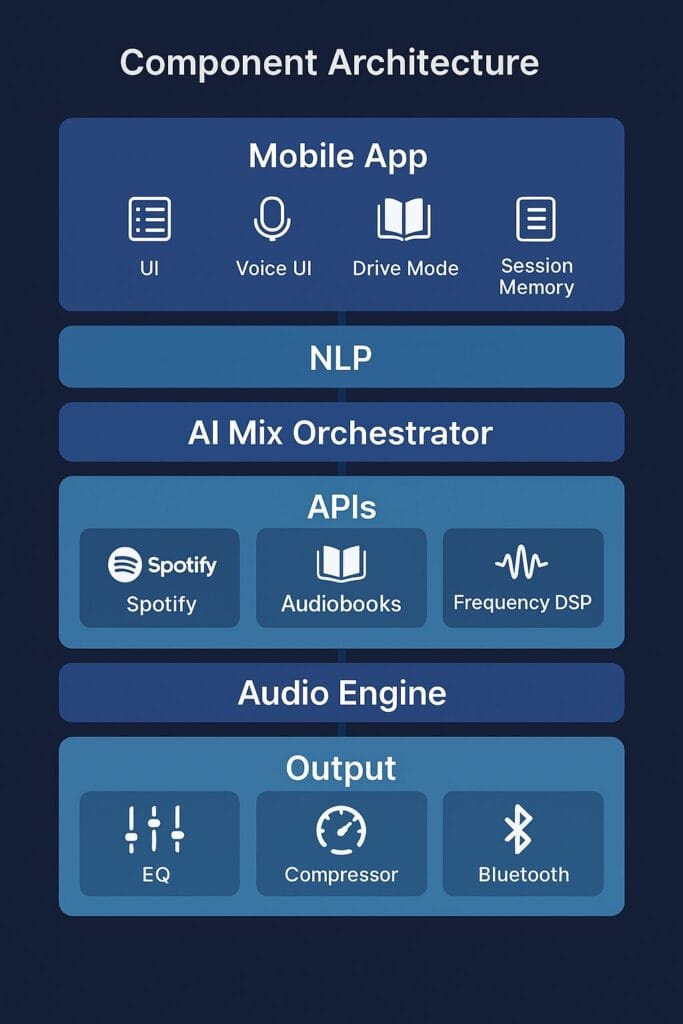

Under the hood, Ei-Mix’s architecture is composed of several interacting modules that together deliver a seamless experience. The Component Architecture diagram above illustrates the major building blocks. At the top, the Mobile App provides the user interface (UI) and handles voice interactions.

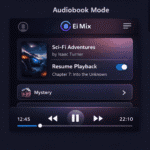

Notably, the Mobile App includes a Voice UI for speech input, a dedicated Drive Mode for safe operation in vehicles, and Session Memory to keep track of user context during a session. The user’s request is processed by the NLP module, which interprets the prompt (e.g., detecting that “I’m feeling stressed” indicates anxiety). Next, the AI Mix Orchestrator (the brain of the system) decides which content to fetch and in what sequence. It interfaces with external APIs: for instance, it can call Spotify’s API for music, an Audiobooks API for spoken word content, or a Frequency DSP service for audio signal processing tasks.

The content from these sources passes through the Audio Engine, where it is enhanced with equalization (EQ) and compression and prepared for output. Finally, the processed audio is delivered to the user. Ei-Mix supports output through various endpoints, including device speakers and Bluetooth (useful for connecting to car audio systems). This modular architecture ensures that each component (from NLP to Audio Engine) can be improved or scaled independently, and it highlights how multiple technologies (mobile development, AI, cloud services, and digital signal processing) are integrated into this project.

The typical workflow in Ei-Mix begins when the user opens the app (or invokes it via a voice trigger) and provides an input, either by speaking or typing. The NLP module analyzes the input to infer the user’s emotional state or intent. For example, a prompt like “I can’t concentrate” might be classified as needing a focus-enhancing audio session. The orchestrator then selects content aligned with that need: it might choose an instrumental concentration playlist from Spotify or a short mindfulness exercise for focus. It calls the respective APIs, gathers the audio tracks, and arranges them.
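
The selection and arrangement step can be sketched as below. This is a minimal sketch under stated assumptions: `CATALOG` is a hypothetical in-memory stand-in for results returned by the external media APIs. The ordering rule (guided content first, then music, then narration) and the 25-minute cap are illustrative choices, not the project's confirmed logic.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Clip:
    title: str
    kind: str      # "meditation", "music", or "narration"
    minutes: int


# Hypothetical catalog standing in for responses from external media APIs.
CATALOG: Dict[str, List[Clip]] = {
    "focus": [
        Clip("Instrumental Concentration", "music", 20),
        Clip("Two-Minute Mindfulness", "meditation", 2),
    ],
    "stress_relief": [
        Clip("Guided Breathing", "meditation", 5),
        Clip("Calm Piano", "music", 15),
        Clip("Uplifting Excerpt", "narration", 10),
    ],
}

# Assumed ordering rule: short guided content first, then music, then narration.
KIND_ORDER = {"meditation": 0, "music": 1, "narration": 2}


def build_session(intent: str, max_minutes: int = 25) -> List[Clip]:
    """Pick clips for an intent and arrange them within a time budget."""
    candidates = sorted(CATALOG.get(intent, []), key=lambda c: KIND_ORDER[c.kind])
    session: List[Clip] = []
    total = 0
    for clip in candidates:
        # Skip any clip that would push the session past the budget.
        if total + clip.minutes <= max_minutes:
            session.append(clip)
            total += clip.minutes
    return session


focus_session = build_session("focus")
```

An unrecognized intent simply yields an empty session, which the app could treat as a cue to ask the user a follow-up question.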

Before playback, the audio engine normalizes volumes and ensures a smooth mix. The app then plays the curated stream to the user, who benefits from multiple resources (music, meditation, and narration) without having to switch between apps. In essence, Ei-Mix streamlines the prompt-to-playback process into one intelligent pipeline that saves the user time and effort while providing emotional support on demand.

🧪 Current Validation Focus

The current development phase focuses on validating the minimum viable innovation pathway: voice-to-audio intelligent orchestration. Rather than expanding features, the scope has been narrowed so that the primary interaction pipeline is validated with measurable results before any feature expansion or scaling.

Developing Ei-Mix has been a rewarding challenge that required me to blend skills from across my technical repertoire, from programming the mobile app (using frameworks for cross-platform development) to implementing machine learning models for NLP intent detection and orchestrating cloud APIs.

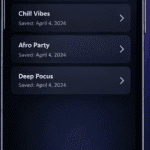

Throughout the project, I applied software engineering best practices: using version control (GitHub) to manage code, testing components (such as the NLP classifier and API integrations) iteratively, and designing with scalability in mind. The current prototype successfully demonstrates the core concept with a focus on two use cases (stress relief and focus enhancement). For the class presentation, I highlighted how Ei-Mix meets various degree objectives, such as AI integration, multi-language development, and data-driven design, proving it’s not just an idea but a functional project.

Overview & Inspiration

Modern life is fast-paced and stressful, especially for young professionals and students who often struggle with maintaining emotional wellness. Whether it’s anxiety during a commute or difficulty staying calm under pressure, many people turn to a scattered set of apps (music streaming, meditation guides, and audiobooks) to cope. The inspiration for Ei-Mix came from this common scenario: what if one intelligent platform could mix these resources on the fly, tailored to how you feel in the moment? The project explores how AI-driven audio orchestration can support emotional regulation by dynamically adapting content to user-reported states, extending beyond academic requirements into practical, real-world applications.

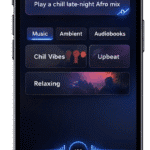

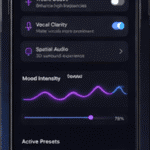

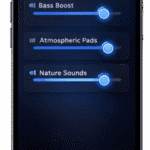

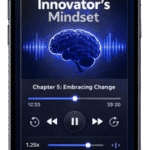

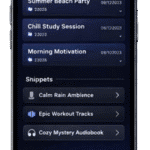

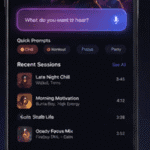

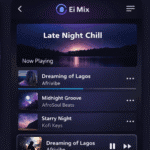

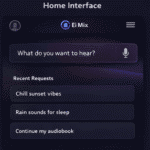

UI mockups

Imagine a user stuck in stressful traffic after a long day, feeling anxious and overwhelmed. Ei-Mix acts as a smart companion in moments like these. For example, a young driver experiencing frustration might launch the Ei-Mix app and simply say, “I’m feeling stressed.” The illustration above depicts such a scenario: the user is upset and seeking relief, surrounded by the separate apps (music, meditation, audiobooks) that would traditionally each be opened on their own. Ei-Mix streamlines this into one platform. Using natural language processing, the app understands the user’s emotional state and immediately responds with a tailored audio mix: perhaps a calming guided meditation from Calm, a soothing playlist from Spotify, or an uplifting audiobook excerpt. By intelligently curating content, Ei-Mix helps users regain calm and build emotional resilience, transforming emotional management into a personalized, on-demand experience.

Solution & Key Features

Ei Mix is implemented as a mobile-based AI system that processes user intent and delivers curated audio sequences aligned with detected emotional context.

Key features of the project include:

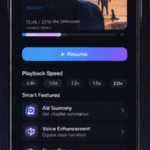

Mobile App & Drive Mode

The front-end is a mobile app interface with both a standard UI and specialized modes. In normal mode, users can browse a library of emotional exercises or manually choose content. Ei-Mix also features a Drive Mode, a simplified, voice-centric interface optimized for safe use while driving. In Drive Mode, large buttons, voice commands, and auditory feedback let users get help without taking their eyes off the road or hands off the wheel. This mode was implemented to address real scenarios where emotional support might be needed (such as road stress) and to do so in a safe, hands-free manner.

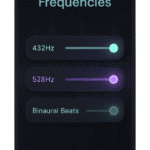

Real-Time Audio Processing

To ensure a smooth listening experience, Ei-Mix includes an advanced Audio Engine pipeline. All fetched audio content goes through processing steps like volume normalization and equalization so that transitions between a song, a narration, or a meditation track feel seamless and consistent in loudness and quality. The audio engine can apply effects (EQ, compression) and even adjust frequency ranges (via a DSP module) to match the user’s environment – for instance, emphasizing calming frequencies or ensuring clarity over car noise.
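
Two of the steps above, matching loudness and smoothing transitions, can be illustrated with a short sketch. It assumes audio is a list of float samples in the range -1.0 to 1.0 and uses simple RMS matching with a linear crossfade. The function names and the `target_rms` value are illustrative. Production loudness normalization typically follows a perceptual standard such as ITU-R BS.1770 rather than raw RMS.

```python
import math
from typing import List


def rms(samples: List[float]) -> float:
    """Root-mean-square level of a block of samples."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def normalize_rms(samples: List[float], target_rms: float = 0.1) -> List[float]:
    """Scale samples so their RMS level matches target_rms."""
    current = rms(samples)
    if current == 0.0:
        return list(samples)  # silence: nothing to scale
    gain = target_rms / current
    # Clamp to [-1, 1] so boosted peaks cannot exceed full scale.
    return [max(-1.0, min(1.0, s * gain)) for s in samples]


def crossfade(a: List[float], b: List[float], overlap: int) -> List[float]:
    """Join two clips, linearly fading a out and b in over `overlap` samples."""
    overlap = min(overlap, len(a), len(b))
    if overlap == 0:
        return list(a) + list(b)
    start = len(a) - overlap
    mixed = []
    for i in range(overlap):
        t = (i + 1) / (overlap + 1)  # fade position, strictly between 0 and 1
        mixed.append(a[start + i] * (1.0 - t) + b[i] * t)
    return list(a[:start]) + mixed + list(b[overlap:])


quiet = normalize_rms([0.01, -0.01] * 100)
loud = normalize_rms([0.5, -0.5] * 100)
joined = crossfade(quiet, loud, overlap=20)
```

After normalization both clips sit at the same RMS level, so the crossfade blends material of matching loudness rather than jumping between a quiet narration and a loud song.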

Natural Language Understanding

Users can interact with Ei-Mix through a voice UI or text prompt. The app employs NLP to interpret user inputs like mood descriptions or requests (e.g., “I feel anxious” or “Help me focus”) and determine the appropriate response or content category. This human-like understanding allows for a hands-free, intuitive experience – crucial if the user is driving or unable to navigate menus.
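
To make the mapping from prompt to content category concrete, here is a deliberately simple keyword-based sketch. It is a stand-in for illustration only. The actual classifier is a machine learning model, and the `INTENT_KEYWORDS` table, the intent labels, and `classify_intent` are hypothetical names.

```python
import re
from typing import Dict, Set

# Hypothetical keyword table; a trained NLP model would replace this lookup.
INTENT_KEYWORDS: Dict[str, Set[str]] = {
    "stress_relief": {"stressed", "anxious", "overwhelmed", "nervous"},
    "focus": {"focus", "concentrate", "distracted"},
}


def classify_intent(text: str) -> str:
    """Return the intent whose keywords overlap most with the prompt."""
    tokens = set(re.findall(r"[a-z']+", text.lower()))
    scores = {intent: len(tokens & words) for intent, words in INTENT_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    # With no keyword match at all, report "unknown" instead of guessing.
    return best if scores[best] > 0 else "unknown"


examples = {
    prompt: classify_intent(prompt)
    for prompt in ("I feel anxious", "Help me focus", "Play something")
}
```

The `"unknown"` fallback matters for a voice interface: it gives the app a clear signal to ask a clarifying question rather than play mismatched content.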

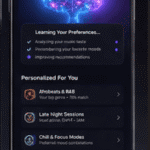

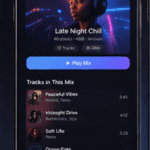

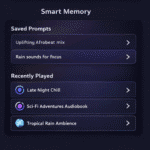

Personalized Audio Curation

Based on the user’s prompt and emotional context, the AI Mix Orchestrator component fetches content from multiple sources. Ei-Mix integrates with third-party media APIs, for example pulling calming music via the Spotify API, retrieving a relevant chapter from an audiobook service, or selecting a guided relaxation exercise. It doesn’t just play a single track; it composes a mix or sequence that suits the user’s current needs. Over time, the system can learn user preferences (using session memory and historical data) to refine recommendations, so the experience becomes more personalized with continued use.
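
One simple way preference learning could work is sketched below. It assumes the app can observe whether a clip was played to the end or skipped. The `SessionMemory` class and its plus-one/minus-one scoring are hypothetical illustrations, not the project's confirmed design.

```python
from collections import defaultdict
from typing import Dict, List


class SessionMemory:
    """Tracks how the user responded to each kind of content."""

    def __init__(self) -> None:
        self._scores: Dict[str, float] = defaultdict(float)

    def record(self, kind: str, completed: bool) -> None:
        # Assumed signal: finishing a clip counts for it, skipping counts against it.
        self._scores[kind] += 1.0 if completed else -1.0

    def rank(self, kinds: List[str]) -> List[str]:
        # Highest score first; sorted() is stable, so ties keep their given order.
        return sorted(kinds, key=lambda k: -self._scores.get(k, 0.0))


memory = SessionMemory()
memory.record("music", completed=True)
memory.record("music", completed=True)
memory.record("narration", completed=False)
preferred = memory.rank(["narration", "meditation", "music"])
```

The orchestrator could use such a ranking to decide which content kind to favor in the next session, while unseen kinds stay neutral until the user reacts to them.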

Cloud Integration & Scalability

Ei-Mix is built with a cloud-backed architecture. User profiles, session data, and content libraries are stored securely in the cloud, allowing the AI models (for NLP and recommendation) to be updated continuously and scaled to many users. The design considers future growth – for example, the architecture can incorporate more content providers or even IoT sensor data (like heart rate from wearables) to further tailor the experience. Security and privacy are also taken seriously: personal data and usage patterns are protected, and sensitive computations can be done on-device when possible to minimize cloud data exposure.